Opening the Claw

Procrastination pays off again..! This has been happening more and more lately.

I set out to learn something complicated, don’t get far, drag my feet, and then find a technology that makes what I was learning obsolete.

That’s what happened with programming: I started learning Java, but then I learned vibe coding instead.

Now procrastination has made another lesson plan obsolete: ROS. ROS got the Claw…

I know what the traditionalists will say about learning the foundations, having a full understanding, clean code, blah blah blah.

But there are no foundations.

There’s a place within a specialization where you decide to start learning.

But we don’t learn machine language or binary. We don’t learn how to etch chips or how to mine palladium. We don’t learn the psychology of international diplomacy that created a global economy of inexpensive, mass-produced technology that allows you to study computer science.

From a holistic perspective, there is no foundation. There is only what you include in your studies.

Acceleration these days means beginning on the shoulders of giants and commissioning AI to fill in the gaps.

Still, even the most hardcore e/acc 10x-er paused at OpenClaw. “Dude automated what?”

My Approach…

When OpenClaw launched as Clawdbot an AI-year ago (6 weeks), I was calling it a scam and wondering whether it was even real. Were folks really burning their AI credits frivolously all night? Did the bots really create a social media site that Meta then bought up..?? It sounded too crazy to even ask Grok whether it was real.

I was getting annoyed by it all. Before its first Molt, I started hitting the “not interested in this” button on Clawdbot posts. I even friended Peter Steinberger to watch for evidence that he was fake. I suspected Mr. Gates was up to something…

Peter got a job at OpenAI, which isn’t solid evidence that he’s real (and it’s still Gates-adjacent), but it made me reconsider. So what was the kicker that finally made me take this seriously?

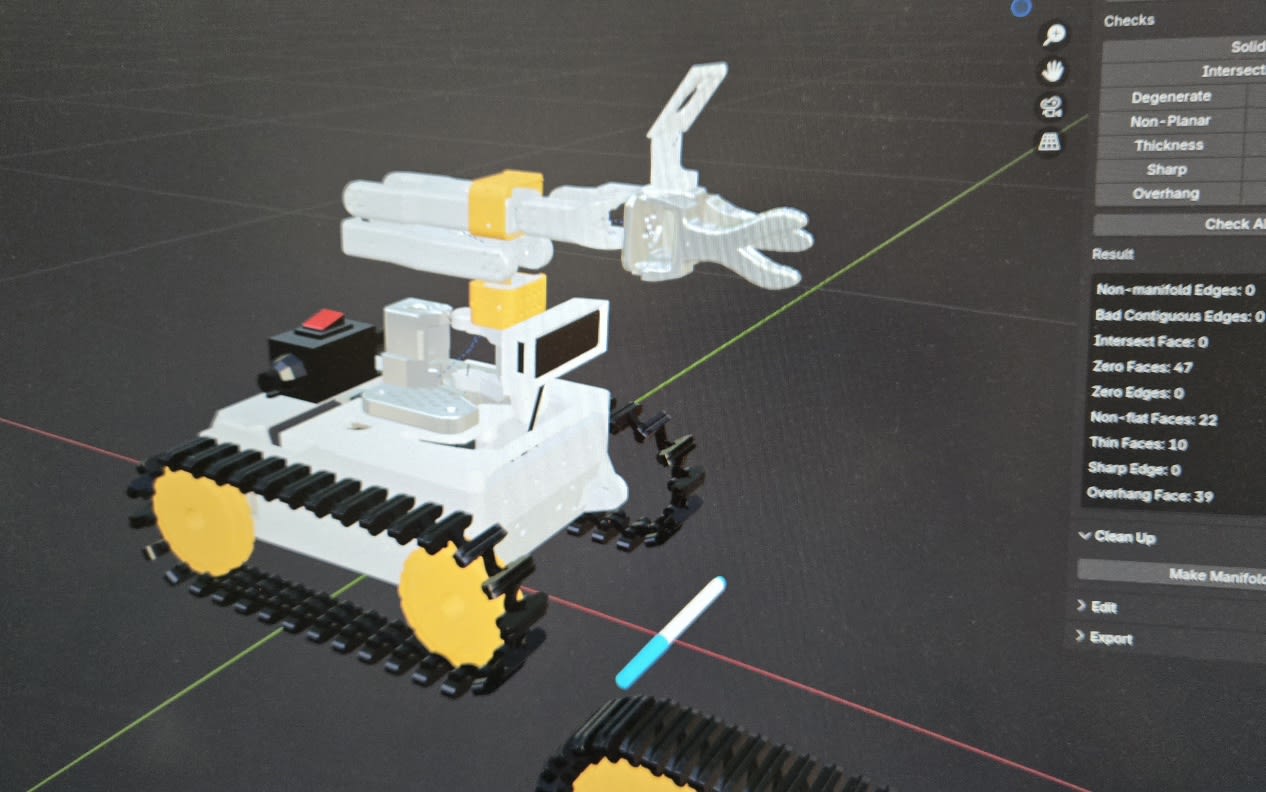

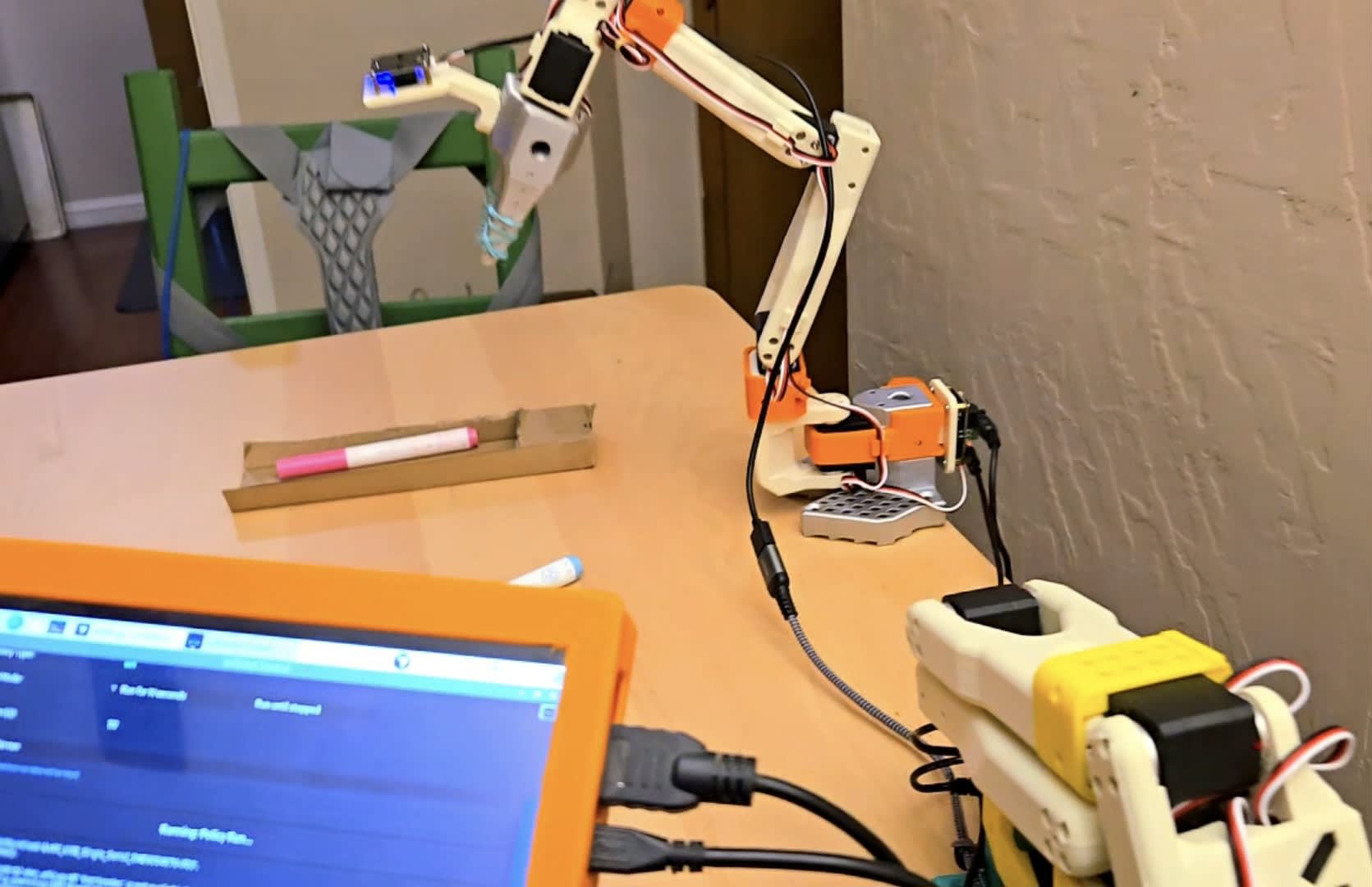

I found a way to let AI control ROS, and through it my robot: ROSclaw. Which means maybe I don’t need to learn ROS.

How much control? What kind of control?

I don’t know yet. Lots, it sounds like, but I won’t know the details until I test it. I’m easing into it slowly.

You can hardly imagine how excited I was by the idea that I wouldn’t have to learn ROS.

That URDF (Unified Robot Description Format) file hit me hard. Anything that might flatten the ROS learning curve is a tool I’m willing to consider.

Imagine if learning to ski meant starting with skis made in the ’60s. All that would really do is increase your chances of getting hurt. Practice with quality, modern equipment, which apparently now means OpenClaw.

It’s time to get up on the shoulders of giants and ride the AI-lobster-monster like you’re trying to escape the Matrix.